To understand LLMs, we need to start with the fundamentals: Machine Learning, Deep Learning, and a remarkable Deep Learning architecture called a transformer.

Understanding Machine Learning

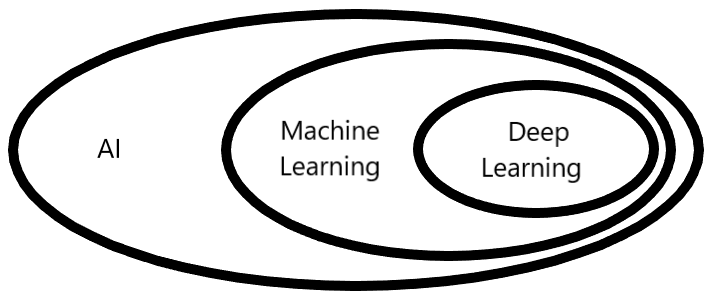

Machine learning is a specialized branch of AI (Artificial Intelligence) that focuses on getting systems to learn from data. The systems are not given explicit rules to follow; instead, they are shown large amounts of example data and find the patterns themselves. For an LLM, that data is billions or trillions of words from books, articles, and other text sources.

Understanding Deep Learning and Neural Networks

Deep learning is a subfield of machine learning that uses neural networks to identify patterns in data. A neural network is a set of interconnected nodes organized in layers: an input layer, many middle layers (called hidden layers), and an output layer. Each node is a simple mathematical function, and the output of the nodes in one layer becomes the input to the nodes in the next layer. The entire trained neural network is called a model, and an LLM is built on a special type of neural network architecture called a transformer.
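To make the idea of layers concrete, here is a minimal sketch of a tiny network with one hidden layer. The weights, biases, and function names are invented for illustration; a real model has far more nodes and learns its weights from data rather than having them typed in by hand.

```python
def relu(x):
    """A common activation function: negative values become zero."""
    return max(0.0, x)

def identity(x):
    """Pass the value through unchanged (used for the output layer here)."""
    return x

def layer(inputs, weights, biases, activation):
    """Compute one layer: each node takes a weighted sum of all its inputs."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(activation(total))
    return outputs

# Hypothetical hand-picked numbers; training would normally set these.
HIDDEN_WEIGHTS = [[0.5, -0.2], [0.3, 0.8]]  # two hidden nodes, two inputs each
HIDDEN_BIASES = [0.0, 0.1]
OUTPUT_WEIGHTS = [[1.0, -1.0]]              # one output node
OUTPUT_BIASES = [0.2]

def forward(inputs):
    """Input layer -> hidden layer -> output layer."""
    hidden = layer(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES, relu)
    return layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES, identity)

print(forward([1.0, 2.0]))
```

The output of the hidden layer is fed straight into the output layer, which is the "output from one node is input into the next" idea in code form.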

An LLM (large language model) is a deep learning model trained on a massive dataset to understand and generate text. Some models are also trained on other kinds of data, such as images and audio. For example, GPT-4o (the model behind ChatGPT) was trained on text, images, audio, and video, making it a multimodal model (meaning it can process multiple types of data). Basically, an LLM is a statistical machine that repeatedly predicts the next word. Technically, LLMs predict the next token, which may be a whole word or part of a word. I will use "token" from now on. Image and music generators use related ideas but often different architectures. This article focuses on text-trained models.
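As a rough illustration of how a word can become several tokens, here is a toy tokenizer that splits each word into the longest pieces it recognizes. The vocabulary and function names are made up for this sketch; real tokenizers learn their vocabulary from data and use more refined algorithms.

```python
# Hypothetical toy vocabulary; real ones contain tens of thousands of pieces.
VOCAB = {"un", "believ", "able", "jump", "ing", "the", "cat"}

def tokenize_word(word):
    """Split one word into the longest known pieces, left to right."""
    tokens = []
    start = 0
    while start < len(word):
        # Try the longest possible piece first, then shrink.
        for end in range(len(word), start, -1):
            piece = word[start:end]
            if piece in VOCAB:
                tokens.append(piece)
                start = end
                break
        else:
            # No known piece matches: fall back to a single character.
            tokens.append(word[start])
            start += 1
    return tokens

def tokenize(text):
    """Tokenize lowercase, whitespace-separated text."""
    return [tok for word in text.lower().split() for tok in tokenize_word(word)]

print(tokenize("the cat jumping"))  # ['the', 'cat', 'jump', 'ing']
print(tokenize("unbelievable"))     # ['un', 'believ', 'able']
```

Common words stay whole, while longer or rarer words are broken into parts, which is why "token" is the more accurate term.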

Why has AI, specifically LLMs, exploded into the public sphere?

Up to 2017, the leading language models (recurrent neural networks) processed words one at a time, in order. In 2017, a team at Google published a paper, "Attention Is All You Need," that introduced the transformer. A transformer is a neural network architecture that can process all the words in a text in parallel (something GPUs excel at). The nitty-gritty of transformers is that each word is associated with a list of numbers, called a vector or embedding. The numbers encode the meaning of the word.

Transformers use a technique called attention. It allows the vectors of numbers that represent the words to exchange information with one another and refine the meaning of each word based on its surrounding context. So the vector for the word "jump" will change to fit the context:

"We jump rope" versus "I need to jump the battery."

The word is the same, but its vector ends up different in each sentence.
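Here is a simplified sketch of that idea. Each word is represented by a short list of numbers, and attention replaces each one with a weighted mix of all the words in the sentence. The two-number word representations are invented for illustration, and the sketch skips the learned query, key, and value transformations that real transformers apply first.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Similarity score between two lists of numbers."""
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """Replace each word's numbers with a weighted mix of every word's numbers."""
    dim = len(vectors[0])
    updated = []
    for query in vectors:
        scores = [dot(query, key) / math.sqrt(dim) for key in vectors]
        weights = softmax(scores)
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        updated.append(mixed)
    return updated

# Hypothetical starting values, invented for this example.
EMBED = {"we": [0.1, 0.0], "jump": [1.0, 1.0], "rope": [2.0, 0.0],
         "the": [0.0, 0.1], "battery": [0.0, 2.0]}

def contextual(sentence, word):
    """Return the numbers for `word` after attention over `sentence`."""
    words = sentence.split()
    return self_attention([EMBED[w] for w in words])[words.index(word)]

print(contextual("we jump rope", "jump"))
print(contextual("jump the battery", "jump"))
```

"jump" starts with the same numbers in both sentences but ends up with different ones, pulled toward "rope" in the first and toward "battery" in the second.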

Transformers have another operation, called a feedforward neural network, which processes each word on its own and is thought to hold much of the knowledge the LLM learned during training.

The LLM alternates between these two operations (attention and the feedforward neural network) layer after layer, so the numbers representing each word become "enriched." Enriched means they contain richer contextual information, capturing the subtle relationships between words. Here is a user prompt:

"It was a dark and stormy ___________."

The LLM will predict the next token. It assigns each candidate token a probability indicating how likely that token is to come next in the sequence.

Options:

- night 78%

- evening 12%

- afternoon 5%

- day 3%

So the most likely token for the prompt is "night": "It was a dark and stormy night." But sometimes the LLM will choose another token.
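Under the hood, the model produces a raw score for every token, and a function called softmax converts those scores into probabilities. The sketch below uses made-up scores, chosen so that the result matches the percentages listed above; the "<other>" entry is a stand-in for every remaining token in the vocabulary.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores.values())
    exps = {tok: math.exp(s - peak) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Hypothetical raw scores, picked to reproduce the example percentages.
scores = {
    "night": math.log(78),
    "evening": math.log(12),
    "afternoon": math.log(5),
    "day": math.log(3),
    "<other>": math.log(2),
}

probs = softmax(scores)
for token, p in probs.items():
    print(f"{token:10s} {p:.0%}")

# "Greedy" selection: always take the single most likely token.
best = max(probs, key=probs.get)
print("Most likely token:", best)
```

Always taking the top token is called greedy selection. It is the simplest strategy, but as noted, it is not the only one LLMs use.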

Why do LLMs produce different content for the same prompts?

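LLMs do exhibit surprising "emergent behavior," but the variation between runs has a simpler cause. The LLM usually does not pick the top-ranked token every time. Instead, it samples: the next token is drawn at random in proportion to the probabilities, so "night" is chosen most often but "evening" will occasionally win. A setting commonly called temperature controls how adventurous this sampling is. Why is this interesting? The LLM was trained on fixed data, but sampling lets it choose between tokens, which is how we get different outputs despite asking the same question. It also makes the text less repetitive and more human-sounding. A separate challenge is the "black box problem": we can see the inputs and outputs, but with billions of learned numbers inside the model, we can't fully explain the reasoning process in between.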
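One common source of this variation is random sampling: instead of always taking the top token, the model draws a token in proportion to its probability. The sketch below illustrates this with the example probabilities from earlier and a setting usually called temperature. The function names and the "<other>" catch-all entry are assumptions made for this example, not any particular product's implementation.

```python
import math
import random

PROBS = {"night": 0.78, "evening": 0.12, "afternoon": 0.05,
         "day": 0.03, "<other>": 0.02}

def apply_temperature(probs, temperature):
    """Low temperature sharpens the distribution; high temperature flattens it."""
    scaled = {tok: math.exp(math.log(p) / temperature) for tok, p in probs.items()}
    total = sum(scaled.values())
    return {tok: s / total for tok, s in scaled.items()}

def sample_token(probs, temperature, rng):
    """Draw one token at random, weighted by its adjusted probability."""
    adjusted = apply_temperature(probs, temperature)
    tokens = list(adjusted)
    weights = [adjusted[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

rng = random.Random(0)  # fixed seed so the demo is repeatable
for temperature in (0.2, 1.0, 2.0):
    picks = [sample_token(PROBS, temperature, rng) for _ in range(10)]
    print(temperature, picks)
```

At a low temperature the model picks "night" almost every time. At higher temperatures the less likely tokens show up more often, which is why the same prompt can produce different answers.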

Conclusion

In a nutshell, an LLM is a sophisticated mathematical function that ranks tokens and typically selects the ones with the highest probability. An LLM is a powerful tool, but you will get better use out of it if you treat it as a teammate rather than a tool. We use a hammer to drive nails or remove them. It is a one-and-done operation, as befits a tool. A one-and-done mindset with an LLM is not as helpful. When you use an LLM, iteratively provide feedback to refine the project you're working on. I guarantee you will have a more productive session with the LLM.
